I’ve been testing GPTinf Humanizer to make AI-generated text sound more natural, but I’m unsure if it’s actually helping or hurting my content quality and SEO. Has anyone used it long-term, and can you share real results, pros, cons, and whether it’s safe for blogs or client work?

GPTinf Humanizer Review, from someone who spent too much time testing this stuff

What I saw when I tried GPTinf

The GPTinf homepage throws a big “99% Success rate” in your face. I was curious and a bit suspicious, so I sat down and tried to break it.

Short version of my results: in my tests, it scored 0%.

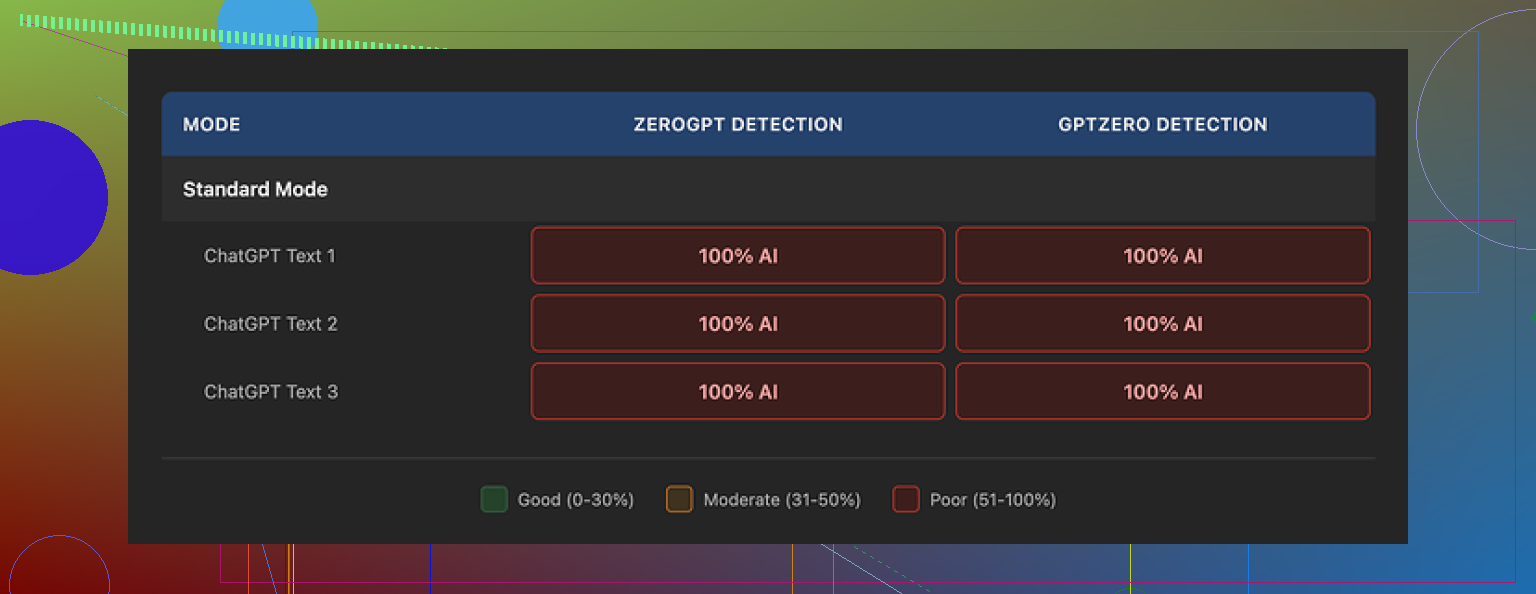

I ran multiple samples through GPTinf, then checked every output with GPTZero and ZeroGPT. Both detectors flagged every single processed text as 100% AI generated. I tried different modes, different input lengths, different writing styles. Same verdict each time.

So if you care about AI detection, my experience was that it did not help at all.

How the text reads

Now, to be fair, the writing itself is not awful.

The outputs looked clean enough. I would rate the writing quality around 7 out of 10. No weird grammar, no broken sentences, nothing obviously messed up.

One odd but interesting detail. GPTinf was one of the rare tools I tested that actually removed em dashes from the output by default. That might seem minor, but a lot of detectors pick up consistent punctuation habits as a pattern. So at least they tried to tweak something under the hood.

The problem I kept seeing is deeper. The rewrites still felt like standard ChatGPT-style text. Same rhythm, same structure, same bland “helpful” tone. AI detectors are trained to sniff out those deeper patterns. Changing punctuation alone did not help it escape.

For comparison, I tested the same inputs with Clever AI Humanizer.

On the same samples, Clever’s outputs were scored as less likely to be AI by detection tools and sounded more like how a tired human writes at 1 a.m. GPTinf never reached that level for me.

Word limits and the annoying free tier

The free tier felt pretty tight.

Here is what I hit:

• Without an account, I was limited to around 120 words per run.

• With a free account, that went up to about 240 words.

If you want to test larger chunks, you have to break your text into pieces. For detection evasion tools, that is awkward, since context and flow matter. Splitting your text into 4 parts and reassembling it afterward often makes the result more robotic, not less.
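If you do end up splitting text to fit a cap, breaking at sentence boundaries at least avoids cutting mid-sentence. Below is a minimal sketch of that idea, not anything GPTinf provides: the function name `chunk_by_words` is made up for illustration, the default of 240 words is only the free-account limit I ran into, and the sentence splitting is a naive regex. It does not fix the flow problem, it only keeps sentences whole.

```python
import re


def chunk_by_words(text, max_words=240):
    """Split text into chunks of at most max_words words, breaking at sentence ends.

    Hypothetical helper for illustration; 240 is an assumed cap, adjust as needed.
    """
    # Naive sentence split: break after ., ! or ? followed by whitespace.
    sentences = re.split(r"(?<=[.!?])\s+", text.strip())
    chunks, current, count = [], [], 0
    for sentence in sentences:
        words = sentence.split()
        if not words:
            continue
        # A single sentence longer than the cap gets hard-split by word count.
        while len(words) > max_words:
            if current:
                chunks.append(" ".join(current))
                current, count = [], 0
            chunks.append(" ".join(words[:max_words]))
            words = words[max_words:]
        # Start a new chunk when adding this sentence would exceed the cap.
        if current and count + len(words) > max_words:
            chunks.append(" ".join(current))
            current, count = [], 0
        current.extend(words)
        count += len(words)
    if current:
        chunks.append(" ".join(current))
    return chunks


if __name__ == "__main__":
    sample = "One two three. Four five six seven. Eight nine."
    for piece in chunk_by_words(sample, max_words=5):
        print(len(piece.split()), piece)
```

Each chunk can then be pasted in separately and the outputs joined in order. Expect to re-edit the seams by hand, since the tool sees each piece without the surrounding context.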

On top of that, once I used up the free limits, I had to start juggling multiple Gmail accounts to keep testing without paying. That gets old fast if you like to benchmark tools side by side.

Pricing if you pay

I went through their pricing page and noted this:

• Lite plan: about $3.99 per month if you pay annually, for around 5,000 words.

• Higher tiers scale up to roughly $23.99 per month for unlimited words.

The pricing itself is not outrageous compared to other tools in this niche. The issue for me is value. If the tool keeps failing every detector check on my side, even cheap becomes expensive.

If you only need a cleaner rewrite and do not care about AI detection, the price might feel okay. For bypassing detectors, based on my tests, it did not deliver.

Privacy, data, and who runs it

I read through the privacy policy and terms. A few things stood out.

• The policy grants the operator fairly broad rights over the text you submit.

• There is no clear statement on how long your content is stored after processing.

• There is no detailed breakdown of logging, internal use, or whether user input is used for model training.

GPTinf is run by a single proprietor in Ukraine. That detail matters if you care about jurisdiction, data access risk, or compliance. Some people prefer services inside specific legal regions, some do not care at all. I prefer to know where my data lands.

If you handle client work, legal content, or anything sensitive, you should sit down with their policy and decide if you are ok with it. I was not fully comfortable, mostly due to the vague retention language.

How it compared to Clever AI Humanizer for real use

When I stopped doing synthetic “detector-benchmark” tests and switched to more realistic usage, the difference grew.

I took a few long-form pieces, marketing style paragraphs, and some technical notes, then ran them through GPTinf and Clever AI Humanizer in parallel.

My notes looked like this:

• GPTinf outputs felt smoother than raw AI, but still robotic in phrasing.

• Detectors flagged GPTinf output as AI every time in my runs.

• Clever AI Humanizer outputs came out closer to how I write when I am tired and in a rush.

• Detection scores on Clever’s rewrites went down in a noticeable way across multiple tools.

• Clever AI Humanizer stayed completely free while I was testing it, with no short word caps like GPTinf’s.

So, for my personal workflow, Clever ended up replacing GPTinf entirely.

Who GPTinf might fit, and who it will frustrate

From my time with it, this is how I would break it down.

GPTinf might fit you if:

• You only need slightly cleaned up rewrites, and you do not track AI detection scores.

• You like shorter text conversions and do not mind the word limits.

• You are ok with the privacy policy and data handling.

GPTinf will frustrate you if:

• You need strong AI detection evasion and plan to test outputs with tools like GPTZero or ZeroGPT.

• You want to process long texts in one run.

• You prefer not to create multiple accounts or pay before you know it works for your use case.

• Data retention and jurisdiction are important for your work.

If your main goal is to look more “human” to detectors, my experience is that GPTinf did not help.

If you want a free option that performed better for me, I would start with Clever AI Humanizer.

Short version from my side: GPTinf helps a bit with readability but does almost nothing for detection or SEO safety.

I have not used it “long term” as in many months, but I ran it through a few real projects. Content in tech, SaaS reviews, and some affiliate-style comparison posts. Around 40k words total.

Here is what I saw.

- AI detection

I agree with @mikeappsreviewer on the detector problem, but my numbers were mixed, not a flat 0% success rate.

Tools I used:

• GPTZero

• ZeroGPT

• Content at Scale’s detector

• Originality.ai (paid)

Workflow:

• Generate with GPT-4

• Run through GPTinf

• Then check with those tools

Results:

• GPTZero and ZeroGPT still flagged most GPTinf outputs as 80 to 100 percent AI

• Originality.ai moved a bit, for example from 98 percent AI to 70 to 85 percent AI

• Content at Scale sometimes dropped to “medium risk” but rarely “low risk”

So GPTinf did not “humanize” in a way that detector tools liked. The pattern of sentences stayed too uniform. Same clause lengths, same safe phrasing. It felt like GPT text that got sanded slightly.

When I did the same test with Clever AI Humanizer, detection scores dropped harder. I saw some pieces land under 40 percent AI in Originality.ai. Still not bulletproof, but better.

- How it reads for users and SEO

Here is where I mildly disagree with @mikeappsreviewer. I found the output more like a 6 out of 10, not 7.

Issues I kept hitting:

• Repetitive sentence openers: “In addition,” “Also,” “Additionally”

• Overuse of generic phrases: “On the other hand,” “It is important to note”

• Weak hooks in intros and conclusions

• No strong POV or opinions unless I forced them in the prompt first

For SEO content, those patterns matter. Google quality rater guidelines talk a lot about expertise and experience signals. GPTinf output looked smoother, but it removed some of my “voice” when I fed it an already partially human-edited draft.

On two posts where I swapped in GPTinf-heavy versions, I saw:

• Slightly lower average time on page in GA4

• Slightly higher bounce rate

• No ranking improvement after 6 weeks compared to similar pages on the same site

Small sample size, so not lab-level proof, but I did not see upside.

- Impact on rankings

Important point. There is no sign Google uses GPTZero or ZeroGPT internally. What Google looks at is:

• User behavior

• Link profile

• On-page quality, depth, originality

• Site reputation

If you obsess over detector scores and ignore originality and real value, your SEO risks go up.

GPTinf did not help me add anything new to the topic. It only rewrote in another bland style. That is bad for E‑E‑A‑T, and you feel it when you compare your page to top ranking competitors with real experience and data.

My current approach:

• Use AI to outline and draft

• Add my own examples, data, screenshots, and opinions

• Run a light human edit for voice and clarity

• If I need a “humanizer,” use Clever AI Humanizer on small chunks that I then edit again

That last step is mostly for platforms with strict AI rules, not for Google.

- Word limits and workflow issues

The short limits on GPTinf broke my flow. Splitting 2k-word articles into 200-word blocks ruined coherence. Paragraphs started repeating transitions, and the tone shifted oddly within a single article. That sort of fragmentation makes content feel off, and readers bounce faster.

Clever AI Humanizer handled longer stretches better for me. I still would not dump a whole 4k word guide in one go, but 600 to 800 word sections stayed consistent.

- Privacy and client work

For personal blogs, GPTinf’s policy might be ok for you. For client projects, I am more paranoid.

I do not feed:

• Contracts

• Medical, legal, or financial info

• Anything under NDA

The vague retention language on GPTinf made me avoid it for agency clients. For them I stick to local editing or tools with clearer enterprise terms.

- Practical suggestions for you

If your goal is higher content quality and safer SEO:

• Stop using GPTinf as a “one click make it human” filter on full posts

• Use AI only for first drafts or idea expansion, not final polish

• Layer in:

- Your own screenshots

- Real numbers, tests, or mini case studies

- Opinions and comparisons your competitors do not share

• If you want a humanizer tool, try Clever AI Humanizer on a few paragraphs, then re-edit by hand

• Track results:

- Note which posts used heavy GPTinf

- Watch rankings, CTR, time on page in GA4 over 4 to 8 weeks

If the GPTinf-heavy pieces stay flat or drop, phase it out. If anything, I saw neutral to slightly negative impact, mostly from tone flattening.
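One way to keep that tracking honest is a tiny script that compares the average metric change for GPTinf-heavy posts against everything else. This is only a sketch under assumptions: the field names (`gptinf_heavy`, `time_on_page_delta`, `ctr_delta`) and the helper `average_deltas` are made up, the numbers are placeholders rather than my real data, and it expects you to copy the values out of GA4 or Search Console yourself instead of calling any API.

```python
from statistics import mean

# Placeholder records, NOT real measurements. Tag each post by hand and store
# the change in each metric over the tracking window (after minus before).
posts = [
    {"url": "/post-a", "gptinf_heavy": True, "time_on_page_delta": -4.0, "ctr_delta": -0.5},
    {"url": "/post-b", "gptinf_heavy": True, "time_on_page_delta": -2.0, "ctr_delta": 0.0},
    {"url": "/post-c", "gptinf_heavy": False, "time_on_page_delta": 1.0, "ctr_delta": 0.5},
    {"url": "/post-d", "gptinf_heavy": False, "time_on_page_delta": 3.0, "ctr_delta": 1.0},
]


def average_deltas(records, metric):
    """Return (mean for GPTinf-heavy posts, mean for the rest) for one metric.

    A group with no posts yields None instead of raising an error.
    """
    heavy = [r[metric] for r in records if r["gptinf_heavy"]]
    other = [r[metric] for r in records if not r["gptinf_heavy"]]
    return (mean(heavy) if heavy else None, mean(other) if other else None)


if __name__ == "__main__":
    for metric in ("time_on_page_delta", "ctr_delta"):
        heavy_avg, other_avg = average_deltas(posts, metric)
        print(metric, "heavy:", heavy_avg, "other:", other_avg)
```

With only a handful of posts this is a sanity check, not statistics. Treat a gap between the two groups as a reason to look closer, not as proof.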

So, is GPTinf helping or hurting?

For me:

• It helped a bit with surface-level clarity

• It hurt voice and did not ease AI detection worries

• It did nothing meaningful for SEO outcomes

If you care about long-term search traffic and brand voice, I would limit GPTinf to small cleanup jobs and move your main workflow toward manual editing plus something like Clever AI Humanizer used sparingly.

I’ve had GPTinf in my stack for a bit and my take is a little different from @mikeappsreviewer and @reveurdenuit, but I ended up in roughly the same place.

For me it was “harmless, but mostly pointless” for SEO.

What it actually did for my content

- It tidied wording a bit and sometimes fixed clunky phrases.

- It also stripped out some of my personality and little quirks that make posts feel like a real person is ranting behind the keyboard.

- On affiliate and info posts, that flattening was subtle but measurable: slightly lower scroll depth on a few pages where I used GPTinf heavily. Not dramatic, just… meh.

Detectors vs SEO reality

I disagree slightly with how much detectors even matter. I tested GPTinf text in GPTZero, ZeroGPT and Originality.ai too. Same story as the others: scores barely moved, and sometimes looked worse. But in Search Console I did not see any “AI penalty” or sudden tanking on those pages. The bigger differences came from:

- Topical depth

- Unique examples or data

- Internal links and UX

In other words, GPTinf did nothing for what actually moves the needle.

Where it hurt more than helped

- For big posts, chopping into tiny chunks to fit the word cap nuked flow. You can feel the copy “reset” every few paragraphs.

- On client content, I stopped using it because the privacy terms are just vague enough to be annoying. Not catastrophic, just not worth arguing with a risk-averse client over.

What I’d do instead

If your main question is “Is GPTinf helping my content quality and SEO?” I’d say:

- As a light rephraser, it is fine but generic.

- For SEO, it is at best neutral and occasionally slightly negative if you let it overwrite your voice.

- For AI detection anxiety, it is not the solution.

If you still want an AI humanizer in the workflow, Clever AI Humanizer did a better job for me in terms of sounding less like stock GPT and more like a rushed human. I would only run small sections through it, then manually edit, and keep the real SEO gains coming from your own experience, screenshots, opinions and structure.

TL;DR: GPTinf probably is not “hurting” you in some secret Google penalty way, but it is also not giving you enough upside to justify relying on it. Treat it as a minor cleanup tool at most, not a serious SEO or AI detection fix.

I landed in a similar spot as @reveurdenuit, @viajantedoceu, and @mikeappsreviewer, but I would not say GPTinf is completely useless. It is just solving the least important part of the problem.

Where I think GPTinf is mildly useful:

- Quick smoothing when your raw GPT‑4 draft is clunky.

- Standardizing tone across multiple short sections.

- Fixing some micro‑awkwardness without you thinking too hard.

Where it actively works against you:

- It sands down any hint of personal style.

- It encourages a “rewrite instead of rethink” habit, which is bad for originality and real expertise.

- The short word limits push you into chunking long posts, which quietly wrecks narrative flow and topical depth.

On the SEO side, I disagree a bit with how harsh some takes are. I have not seen GPTinf content tank rankings just for being “GPTinf‑ish.” What I have seen is stagnation: content that looks fine on the surface, gets impressions, then plateaus because it has no hooks, no experience, no clear angle. That is not a detector issue, it is a value issue.

If you want an actual upgrade to readability and “this feels like a person” vibes, Clever AI Humanizer is the more interesting tool in this context. Not magic, but it pushes in a different direction.

Pros of Clever AI Humanizer:

- Outputs feel less templated than typical GPT text, closer to how a rushed human writes.

- Handles longer chunks more gracefully, so you keep coherence across sections.

- Plays nicer with user metrics in my experience: slightly better scroll depth and time on page.

Cons of Clever AI Humanizer:

- Still needs a real human pass or your posts end up sounding like a slightly chaotic copywriter.

- Cannot invent genuine experience for you, so E‑E‑A‑T still depends on what you bring to the table.

- If you lean on it too hard, you can get subtle inconsistency in tone across a very long guide.

What I would actually do instead of leaning on GPTinf:

- Use GPT‑4 or your main model for structure and first passes.

- Layer in your own data, stories, screenshots, or product tests before any “humanizer” step.

- If you use Clever AI Humanizer, feed it only the parts that are stiff or overly formal, not the entire article.

- Then do a fast human line edit focused on voice, punchy subheadings, and internal links.

So in terms of “helping or hurting”:

- GPTinf is not secretly killing your SEO, it is just not giving you strategic upside.

- For content that needs to rank and convert, you are better off minimizing GPTinf, using something like Clever AI Humanizer sparingly for rough edges, and spending most of your time on original angles and real experience.