I’ve been testing the Monica AI humanizer for rewriting my content, but I’m not sure if it’s actually improving quality or just changing wording. Has anyone used it long term and can explain how well it passes AI detectors, affects SEO, and keeps a natural human tone? I really need help deciding if I should rely on it for client projects.

Monica AI Humanizer review, from someone who tried to break it on purpose

Quick context

I’ve been going through different AI “humanizers” and feeding them the same source text, then hammering the outputs with detectors like GPTZero and ZeroGPT. Monica AI Humanizer was one of them.

The full review with detection results is here:

https://cleverhumanizer.ai/community/t/monica-ai-humanizer-review-with-ai-detection-proof/33

Monica is mainly an AI suite with chat, images, video, etc. The humanizer is more like a side attachment than the main engine. I went in with pretty low expectations and it still surprised me, in a bad way.

Controls and workflow

Here is how the “workflow” goes:

- Paste your text

- Press one button

- Get output

- No way to tune anything

No tone options.

No strength or “level” slider.

No different modes.

You get what it gives you. If the result trips detectors, there is nothing to tweak except regenerating and hoping.

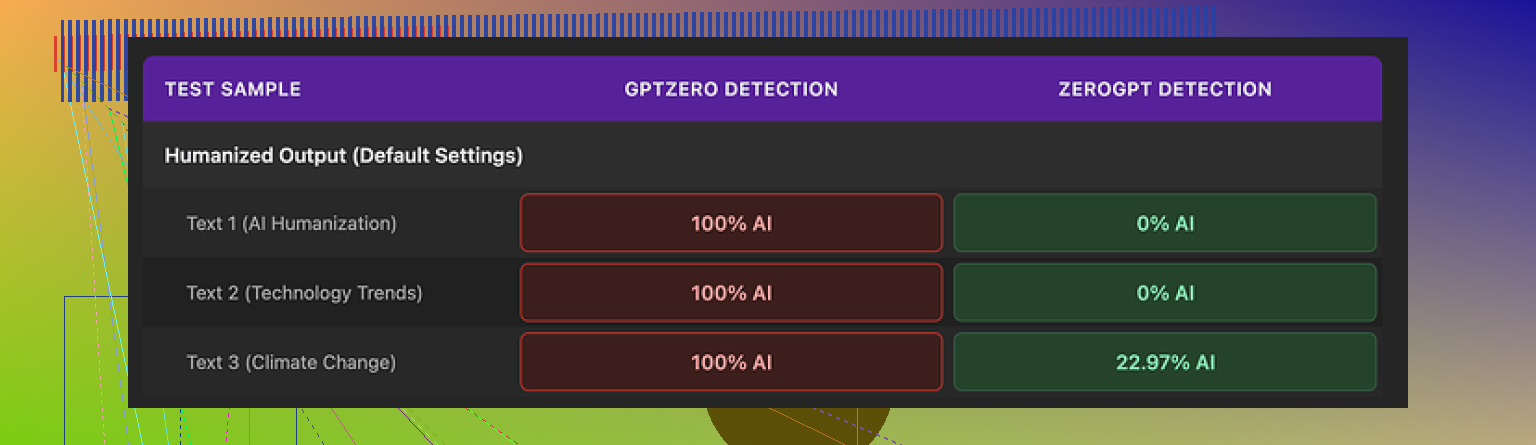

Detector tests

Here is what I ran:

Source text: clean AI-written paragraph, no mistakes, no weird formatting.

I passed the Monica output through:

• GPTZero

• ZeroGPT

My results:

• GPTZero: flagged every Monica output as 100% AI. All of them.

• ZeroGPT: two samples came back at 0%, one sample around 23% AI.

So you end up with a split:

ZeroGPT sometimes says “this looks human enough”.

GPTZero says “nope, all AI”.

If your teacher, editor, or client uses GPTZero, this tool puts you in a bad spot. You have no way to nudge the text in a safer direction, since there are no controls at all.
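If you want to keep track of runs like these, here is a minimal sketch of how the numbers above could be tallied. The scores are typed in by hand, not pulled from any detector API. The ZeroGPT values are the ones reported above; the GPTZero list assumes the same three samples were checked, and the 50% "flagged" cutoff is my own assumption, not something either detector defines.

```python
from statistics import mean

# "Percent AI" scores, entered manually after each detector run.
# Assumption: GPTZero was run on the same three samples as ZeroGPT.
scores = {
    "GPTZero": [100, 100, 100],
    "ZeroGPT": [0, 0, 23],
}

def summarize(samples, threshold=50):
    """Return the mean score, the worst score, and how many samples exceed threshold."""
    return {
        "mean": round(mean(samples), 1),
        "worst": max(samples),
        "flagged": sum(s > threshold for s in samples),
    }

summary = {name: summarize(vals) for name, vals in scores.items()}
for name, stats in summary.items():
    print(f"{name}: {stats}")
```

The "worst" column is the one to watch if you do not know which detector your reader uses, since one bad score is enough to get flagged.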

Writing quality

I rate the writing around 4/10.

Here is what I saw:

• It introduced new typos into clean text.

Example: “But” became “Ubt”. I did not mistype that; the tool did.

• It randomly messed with punctuation.

It added apostrophes that were not needed and left others missing.

• One output started with “[ABSTRACT” for no reason.

The original had nothing like that. It looked like a half-broken academic template.

• It kept em dashes from the original AI text and seemed to add new ones.

Since a lot of detectors and human readers associate overuse of em dashes with AI text, this is the opposite of useful for “humanizing”.

In other words, it does not improve flow much, introduces surface-level noise, and still looks AI enough to fail GPTZero every time.

Pricing and where it fits

Pricing for Monica’s Pro plan (billed annually) starts around $8.30 per month.

Important detail: that price is for the whole Monica platform, not for the humanizer alone. You get:

• Chatbot

• Image generator

• Video tools

• Other AI utilities

• Plus the humanizer as a small feature buried in there

If you already use Monica for chat or images, the humanizer feels like a free side toy. In that case, there is nothing to lose by trying it, as long as you do not rely on it for serious detection-sensitive work.

If your main need is detector evasion, paying for Monica for this specific feature looks like a bad trade.

How it compares to other tools

I ran the same tests across different humanizers using the same input text and the same detectors.

In my runs:

• Clever AI Humanizer produced more natural phrasing

• It avoided those strange typos

• Detector scores were more stable, especially on harder detectors

• It did not require payment

You can see details on the same page:

https://cleverhumanizer.ai/community/t/monica-ai-humanizer-review-with-ai-detection-proof/33

So if your goal is:

• Lower detection risk across tools like GPTZero

• Cleaner text without random “[ABSTRACT” or “Ubt”

• No subscription tied to a full AI suite

Then Monica’s humanizer is not a good primary option based on the tests I ran.

When it makes sense to use Monica’s humanizer

From my runs, I would only use it in these cases:

• You already pay for Monica for other stuff and want quick, low-effort tweaks.

• You do not care much about GPTZero specifically, and your checker is closer to ZeroGPT behavior.

• You manually proofread and fix the output afterward, including the typos and weird additions.

If you need something to pass unpredictable detectors and you do not know which tool your content will face, this one feels too risky.

I’ve run Monica’s humanizer for a few weeks on blog posts and technical docs. Short version: it changes wording, it does not improve the writing in any reliable way, and it performs poorly against stricter detectors.

What I saw on my side:

- AI detection performance

- GPTZero: same as what @mikeappsreviewer saw. My samples stayed flagged as AI most of the time, even when I shortened and simplified the text first.

- ZeroGPT: scores bounced around. Some went low, others stayed high, with no clear pattern.

- Other detectors: On random free checkers, it sometimes passed, sometimes failed. No stable behavior.

If you need lower risk across unknown detectors, it is weak. It helps a little in some cases, but you cannot trust it.

- Writing quality

- It often swapped words without fixing structure or flow. So the text felt slightly different, not better.

- I also got weird typos and odd punctuation. Needed a careful proofread every time.

- It did not adapt tone well. Academic text stayed stiff. Marketing copy stayed robotic.

If your goal is better style, you are better off editing your own work, or using a normal paraphraser and then editing.

- Practical use cases

Good for:

- Quick variation of a paragraph when you already plan to hand edit.

- Low stakes content, like internal notes or first drafts.

Bad for:

- Essays that face GPTZero or similar tools.

- Client work where you promise “human written” content.

- Situations where you need consistent, natural tone.

- What I do now

My current workflow looks like this:

- Write or generate the base text.

- Run it through a stronger humanizer when I care about detectors. Clever AI Humanizer did better in my tests. It felt more natural, had fewer random errors, and landed safer scores on tougher tools.

- Then I edit by hand for tone and clarity.

If you want to focus on passing AI detection with cleaner language, a tool that does smarter, human-style rewriting is worth trying side by side with Monica. Run both on the same input, hit the same detectors, and compare.
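A rough sketch of that side-by-side comparison, assuming you record each detector's "percent AI" score by hand. The function names are hypothetical, and ranking by worst-case score is my own choice for the "unknown detector" situation, not an established method. The only data filled in is the Monica numbers reported in the first post of this thread; the second tool is left as a commented-out slot for your own runs.

```python
def worst_case(tool_scores):
    """Highest 'percent AI' score a tool received on any detector and any sample."""
    return max(max(samples) for samples in tool_scores.values())

def rank_tools(results):
    """Sort tools from safest to riskiest by their worst-case score."""
    pairs = ((tool, worst_case(scores)) for tool, scores in results.items())
    return sorted(pairs, key=lambda pair: pair[1])

results = {
    # Scores reported earlier in the thread (GPTZero list assumes three samples).
    "Monica": {"GPTZero": [100, 100, 100], "ZeroGPT": [0, 0, 23]},
    # "Other tool": {"GPTZero": [...], "ZeroGPT": [...]},  # fill in after your own runs
}

for tool, score in rank_tools(results):
    print(f"{tool}: worst case {score}% AI")
```

Use the same input text and the same detectors for every tool, otherwise the ranking does not mean much.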

- SEO friendly version of your topic

“Monica AI Humanizer Review: Does It Pass AI Detectors And Improve Content Quality?

I tested the Monica AI Humanizer on blog posts, essays, and long-form content to see if it improves writing quality and helps pass AI content detectors like GPTZero and ZeroGPT. The tool changes wording, but often leaves sentence structure, tone, and flow untouched. In my experience, GPTZero still flagged most outputs as AI-generated, and ZeroGPT results were inconsistent. Monica’s humanizer sometimes introduces typos and odd punctuation, so you need to proofread every result.

If you need reliable human-style rewriting for SEO content, essays, or client work, and want better odds against AI detection tools, compare Monica with alternatives such as Clever AI Humanizer. This helps you see which service produces more natural language, fewer errors, and safer scores on common AI detectors.”

Same boat here. I’ve used Monica’s humanizer on and off for a couple months for school stuff and blog posts, and I’d say it’s mostly just… word shuffling.

I agree with a lot of what @mikeappsreviewer and @jeff saw, but my take on use cases is a bit different:

- For pure quality, it rarely helped. It swaps synonyms, keeps the same stiff structure, and sometimes actually makes the sentence clunkier. I had to fix phrasing manually almost every time.

- For AI detection, my results were mixed. GPTZero usually still flagged it as AI. On some lighter detectors it sometimes slipped through, but it felt like luck, not design. If your teacher is strict or uses GPTZero, I would not rely on it.

- It did introduce weird artifacts for me too: random commas, awkward transitions, and once it repeated a sentence fragment. So you must proofread.

Where I disagree slightly with the others: I don’t think it is completely useless. If you already pay for Monica and just want a fast “change this up a bit so it doesn’t look like I pasted straight from ChatGPT” for low stakes stuff, it’s… fine. For serious essays, client work, or anything where getting flagged would actually hurt you, it’s not enough on its own.

If your goal is more natural writing that has a better shot at passing detectors, a tool that does smarter, human-style rewriting is worth trying side by side. In my tests it kept the meaning but changed structure more, which feels closer to how a real person would edit, and I got fewer “lol what is this typo” moments.

To make your topic easier to find and read, you might frame it like this:

“Monica AI Humanizer Review: Is It Actually Improving Content Quality or Just Rewording Text?

I have been using the Monica AI Humanizer to rewrite articles, essays, and other content to see if it makes my writing more natural and helps bypass AI detection tools. I want to know whether long-term users feel it truly improves sentence flow, tone, and readability, or if it mainly changes wording without reducing AI detection scores. I am especially interested in how Monica performs against popular AI detectors such as GPTZero and ZeroGPT, and whether it is reliable enough for academic work, blog content, or client projects.”

If that’s your situation, I’d treat Monica as a quick variation tool, not a real “make this safe from detectors” solution.