I’ve been following Walter’s AI product reviews and I can’t tell if they’re genuinely useful or mostly promotional. Some of his breakdowns seem insightful, but others feel like sponsored content without clear disclosure. I’m trying to decide whether to trust his ratings when choosing AI tools for my business. Has anyone analyzed his reviews for bias, accuracy, or real-world usefulness, and how do you personally decide if his recommendations are worth following?

Walter Writes AI review, from someone who banged on it for a while

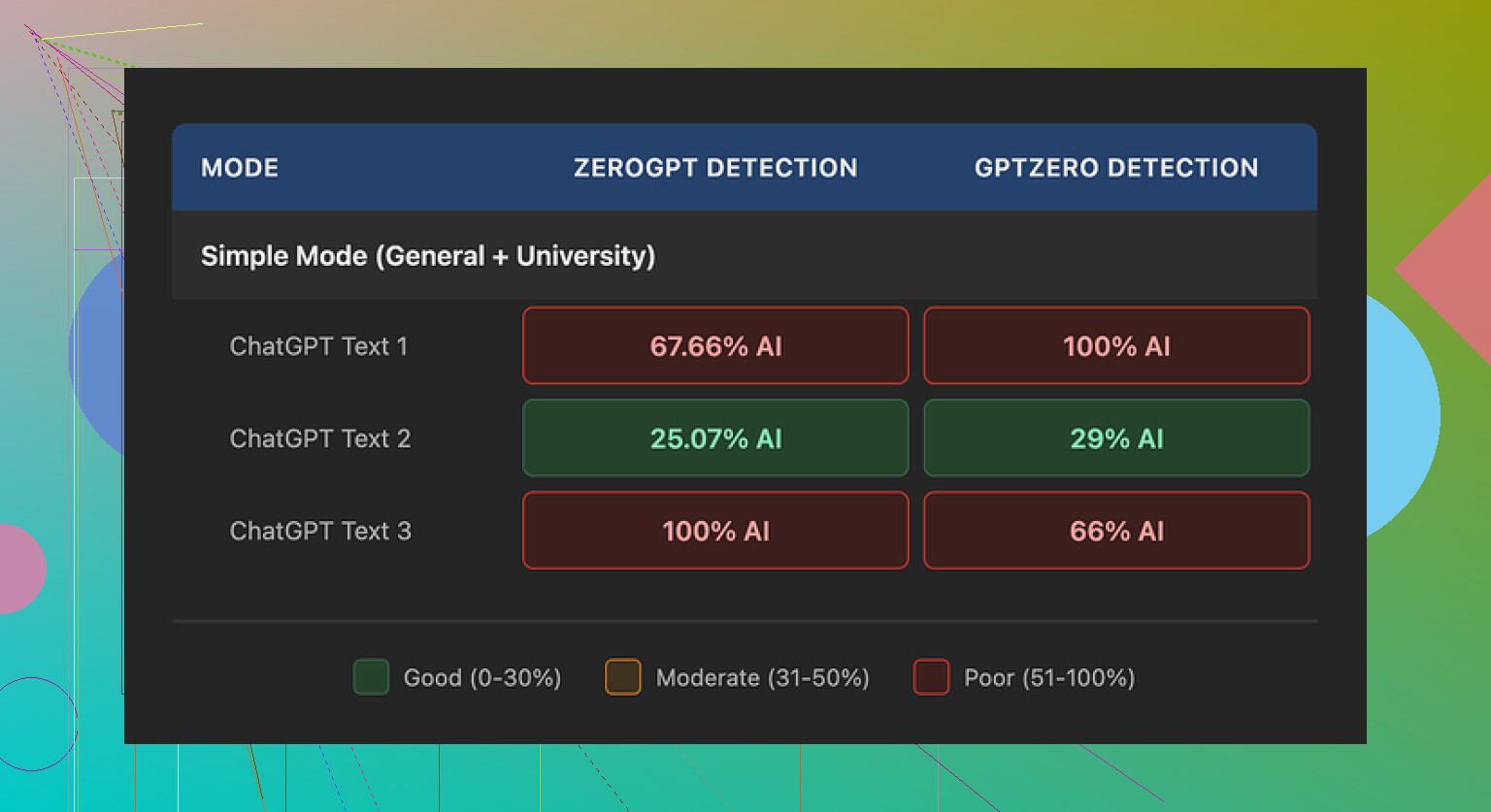

I ran Walter Writes AI through a few of the usual detectors and the results were all over the map.

Using the free version (Simple mode only), I pushed three different samples through and checked them on GPTZero and ZeroGPT, plus a couple of smaller tools on the side.

What I saw

First sample:

- GPTZero said 29% AI

- ZeroGPT said 25% AI

For a free tool, that is better than most of the random “AI humanizer” sites people spam in threads. That one sample might pass for some use cases, especially if your checker is weak or your reviewer is lazy.

Then it got messy.

Second and third samples:

- Each one hit 100% AI on at least one detector

- The other detectors jumped higher too, nowhere near those 20-something scores

So it behaves like this:

Sometimes it gives you a decent low-score output.

Then the next run looks like straight-up AI text to the detectors.

I only had the free tier, so I could not touch the “Standard” or “Enhanced” modes they keep behind the paywall. Maybe those modes score better, but I am not going to guess. All I can say is that Simple mode is inconsistent.

How the writing looked

Here is where it bugged me more than the scores.

In multiple outputs I saw the same patterns:

- Weird semicolon spam

  It kept dropping semicolons where a normal person would use commas or just end the sentence. Not once or twice. Over and over.

- Word repetition

  In one sample it used the word “today” four times in three sentences. No joke. You get stuff like:

  “Today, people face many choices today, and today it is hard to decide…”

  That kind of thing sticks out to human readers and to detectors.

- Parenthetical examples cloned everywhere

  Phrases like “(e.g., storms, droughts)” or similar show up on repeat.

  Same structure, same rhythm, again and again.

  It reads like a model prompt template more than a person thinking on the page.

When I pushed that kind of output into GPTZero and ZeroGPT, scores went up, which did not surprise me at all.
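If you want to spot the repetition and semicolon tells without eyeballing every output, a rough check like the one below can help. It is only a sketch: the function names, the three-sentence window, and the thresholds are my own assumptions, not anything the tool or the detectors use.

```python
import re
from collections import Counter

def repeated_words(text, window=3, min_count=3, min_len=4):
    """Flag words that appear at least min_count times inside any run of
    `window` consecutive sentences. Thresholds are arbitrary assumptions."""
    # Naive sentence split: break after ., ! or ? followed by whitespace.
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]
    flagged = {}
    for i in range(max(1, len(sentences) - window + 1)):
        chunk = " ".join(sentences[i:i + window]).lower()
        # Ignore very short words (a, the, and) so they do not drown the signal.
        words = [w for w in re.findall(r"[a-z']+", chunk) if len(w) >= min_len]
        for word, n in Counter(words).items():
            if n >= min_count:
                flagged[word] = max(flagged.get(word, 0), n)
    return flagged

def semicolon_rate(text):
    """Semicolons per 100 whitespace-separated words."""
    words = len(text.split())
    return 100 * text.count(";") / words if words else 0.0

sample = "Today, people face many choices today, and today it is hard to decide."
print(repeated_words(sample))
print(semicolon_rate(sample))
```

This will not tell you what a detector thinks. It only makes the patterns I noticed by ear easier to count across several outputs.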

Here is one of the screenshots from the runs:

Pricing and limits

I dug into their pricing page and terms for a bit.

What they offer:

- Starter plan

  - From $8 per month if you pay annually

  - Around 30,000 words per month

- Unlimited plan

  - $26 per month

  - They call it Unlimited, but each submission caps at 2,000 words

So even on the top plan, you cannot throw a full thesis or a long report in one go. You have to slice it into chunks. Each chunk is processed on its own, so the same stylistic patterns repeat in every batch and pile up across the full document.
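For anyone who ends up slicing a long document by hand, here is a minimal sketch of the chunking step. The 2,000-word cap is the per-submission limit mentioned above; the function name and the choice to break on blank-line paragraph boundaries are my own assumptions.

```python
def split_into_chunks(text, max_words=2000):
    """Split text into chunks of at most max_words words, breaking on
    paragraph boundaries (blank lines) where possible.
    Whitespace inside each paragraph is normalized to single spaces."""
    chunks, current, count = [], [], 0

    def flush():
        nonlocal current, count
        if current:
            chunks.append("\n\n".join(current))
        current, count = [], 0

    for para in text.split("\n\n"):
        words = para.split()
        if not words:
            continue
        # A single paragraph over the cap has to be hard-split mid-paragraph.
        while len(words) > max_words:
            flush()
            chunks.append(" ".join(words[:max_words]))
            words = words[max_words:]
        # Start a new chunk if this paragraph would push us over the cap.
        if count + len(words) > max_words:
            flush()
        current.append(" ".join(words))
        count += len(words)
    flush()
    return chunks

doc = "\n\n".join(["word " * 1500] * 3)
for i, chunk in enumerate(split_into_chunks(doc), start=1):
    print(i, len(chunk.split()))
```

Splitting on paragraphs keeps each submission readable, but it does nothing about the repeated style per batch, so you would still need to edit across chunk boundaries afterwards.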

Free plan:

- Total of 300 words, not per day, not per month, total

- Enough to test vibes, not enough for real use

Policy and data stuff

Two things stood out when I read through their refund and data text:

- Refund / chargeback wording

  The refund policy is written with aggressive chargeback language, including threats of legal action if you dispute payments. That is a red flag for me. Services that are confident in their product usually do not lead with threats.

- Data retention

  How long they keep your text and what they do with it is not spelled out in detail.

  For anyone using this on client documents, school work or private material, that lack of clarity is a risk. If you care about privacy, you want concrete statements, not vague lines.

What worked better for me

While testing this stuff I kept comparing outputs to another tool I had open in a different tab.

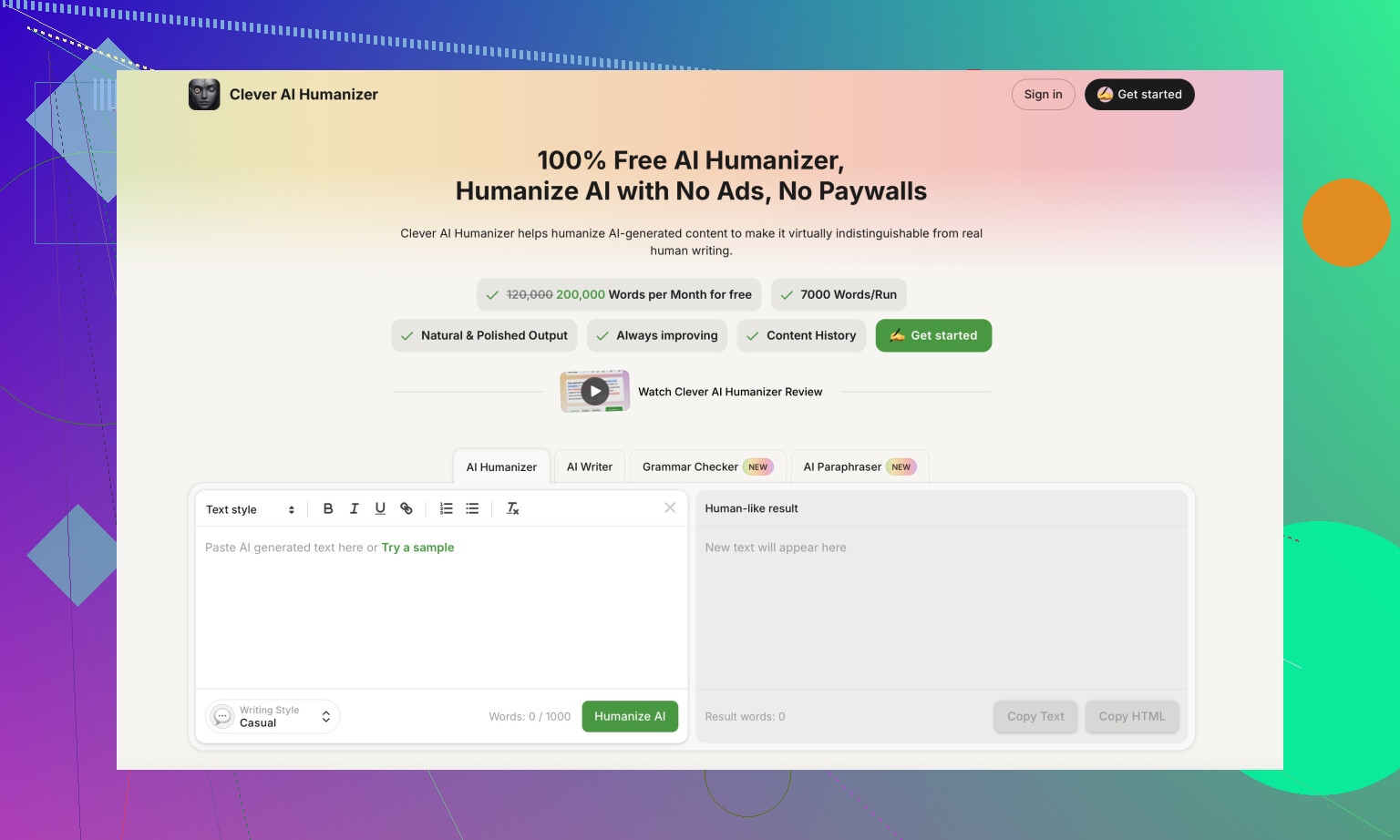

Clever AI Humanizer:

Across several runs, text from Clever AI Humanizer came out more natural to my ear and hit lower AI percentages more consistently. No login, no payment wall for the basic use, no 300-word total cap. That made it easier to test different tones and lengths without worrying about burning credits.

If you want a walkthrough, there is a Reddit tutorial here:

Humanize AI (Reddit Tutorial)

https://www.reddit.com/r/DataRecoveryHelp/comments/1l7aj60/humanize_ai/

There is also a separate Reddit review thread focused on Clever AI Humanizer:

Clever Ai Humanizer Review on Reddit

https://www.reddit.com/r/DataRecoveryHelp/comments/1ptugsf/clever_ai_humanizer_review/

And if you prefer watching instead of reading, someone did a YouTube review:

YouTube Video Review

My takeaway after messing with Walter Writes AI for a bit:

- Detection scores swing a lot between runs in Simple mode

- The writing style has obvious AI tells, like repeated words and recycled parenthetical patterns

- Pricing is not cheap if you need volume, and the “Unlimited” label is misleading with a 2,000-word per submission limit

- Policy language around refunds and data feels hostile and unclear

If you are testing tools, I would throw a few of your own paragraphs into multiple detectors, compare Walter with Clever AI Humanizer, and see what passes your own sniff test before paying for anything.
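If you do run your own comparison, it helps to write the scores down and look at the spread rather than the best run. A small sketch, assuming you type the detector percentages in by hand: the first sample's numbers are the ones I reported above, the rest are illustrative stand-ins, and the 20-point threshold is an arbitrary choice of mine.

```python
from statistics import mean

def score_spread(runs):
    """runs maps a detector name to a list of '% AI' scores, one per sample.
    Returns min, max, mean and spread (max minus min) per detector."""
    summary = {}
    for detector, scores in runs.items():
        summary[detector] = {
            "min": min(scores),
            "max": max(scores),
            "mean": round(mean(scores), 1),
            "spread": max(scores) - min(scores),
        }
    return summary

def is_consistent(runs, max_spread=20):
    """True only if every detector stays within max_spread points across samples."""
    return all(max(s) - min(s) <= max_spread for s in runs.values())

# First value per detector is from my first sample; the others are placeholders.
runs = {
    "GPTZero": [29, 100, 100],
    "ZeroGPT": [25, 70, 100],
}
for detector, stats in score_spread(runs).items():
    print(detector, stats)
print("consistent:", is_consistent(runs))
```

One low score means little if the spread is wide, which is the whole problem with Simple mode.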

Short answer from my side: Walter’s stuff looks mixed, and you should treat it as semi-promotional content unless proven otherwise.

A few concrete things you can do to judge his reviews, without repeating what @mikeappsreviewer already tested:

- Check consistency across reviews

  - Look at 5 to 10 of his AI product reviews.

  - Note if he ever says “don’t buy this” or gives strong negatives.

  - If every tool is “solid” or “worth trying” and the downsides get one short line, assume promotion is a big factor.

- Look for disclosure patterns

  - Scroll to the top and bottom for “sponsored”, “affiliate”, “partner”, “referral”.

  - If links look like tracking links and there is no clear disclosure, that is a red flag.

  - Some creators hide disclosure in a generic line like “this post contains affiliate links” once on the whole site, not per review. That is weak transparency.

- Compare what he says to your own tests

  - Pick one tool he raves about.

  - Run it yourself on a small real task you care about, like an email, essay, or blog intro.

  - Check if your experience matches his specific claims.

  - If he says “human level” and you see obvious AI patterns or errors, his bar is lower than yours.

- Weight his opinions by risk

  - If you care about academic integrity, legal work, or client trust, treat any “passes AI detectors” claim as marketing, not fact.

  - For low risk use, like rough drafts, you can be more relaxed and focus on price, speed, and ease of use.

- Look for comparative criticism, not hype

  - Good reviewers compare tools side by side and explain tradeoffs.

  - Stuff like “X is better at tone, Y is cheaper, Z is faster” is more trustworthy than “X is amazing, here is my link”.

  - When he mentions weaknesses, see if they are concrete and testable, like “inconsistent output” or “odd punctuation”.

- Use independent references

  - Take a couple of tools Walter promotes and search for “tool name reddit”, “tool name review”, “tool name scam”.

  - Check if other users report the same issues that @mikeappsreviewer found with Walter Writes AI, like odd writing patterns or aggressive refund terms.

  - When multiple unrelated people point to the same flaw, that speaks louder than one polished review.

- Separate “reviewer skill” from “tool quality”

  - A smooth YouTube edit or slick blog template does not mean the testing process is solid.

  - Look for screenshots, sample outputs, and a description of how he tested.

  - If he never shows raw outputs or explains what he asked the tool to do, the review leans toward hype.
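The disclosure check from the list above is easy to semi-automate once you have a review's text pasted into a file or string. This is a rough sketch under my own assumptions: the keyword list comes from the bullet above, but the list of "tracking-looking" query parameters and the function names are illustrative guesses, not a standard.

```python
import re
from urllib.parse import urlparse, parse_qs

DISCLOSURE_TERMS = ("sponsored", "affiliate", "partner", "referral")
# Query parameters that often show up on referral links (assumed, not exhaustive).
TRACKING_PARAMS = ("ref", "aff", "affiliate", "via", "utm_source")

def looks_tracked(url):
    """True if the URL carries one of the assumed tracking parameters."""
    params = parse_qs(urlparse(url).query)
    return any(p in params for p in TRACKING_PARAMS)

def scan_review(text):
    """Report disclosure wording and tracking-style links found in a review."""
    lower = text.lower()
    terms = [t for t in DISCLOSURE_TERMS if t in lower]
    urls = re.findall(r"https?://\S+", text)
    tracked = [u for u in urls if looks_tracked(u)]
    return {
        "disclosure_terms": terms,
        "tracking_links": tracked,
        # Red flag: tracking-style links with no disclosure wording anywhere.
        "red_flag": bool(tracked) and not terms,
    }

review = "Try it here: https://example.com/tool?ref=walter123 It is great."
print(scan_review(review))
```

A clean result does not prove a review is unsponsored, and a hit does not prove it is. It only tells you where to look more closely.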

On the Walter Writes AI product itself, I slightly disagree with the idea that inconsistent detector scores alone make it a write-off. Every AI humanizer and paraphraser has inconsistent scores across GPTZero, ZeroGPT, etc. The bigger concern for you is style problems and data policy, not a single number from a detector.

If your goal is text that feels less like AI and more like you, pricing, limits, and privacy matter more than Walter’s opinion. This is where a tool like Clever AI Humanizer becomes relevant. It tends to focus on more natural sentence patterns and gives you more room to test before paying. That helps you benchmark what “good enough” looks like for your own use case, rather than relying on any one reviewer.

My take on Walter’s reviews overall:

- Use them as a starting point to find tools.

- Do not rely on them for final judgment.

- Cross check with independent users, your own tests, and at least one competing tool like Clever AI Humanizer.

- If something feels like an ad, treat it like an ad.

Short answer: Walter’s stuff is mixed, and you’re right to feel like some of it reads like ads.

What @mikeappsreviewer and @cacadordeestrelas already showed is that the product he’s hyping (Walter Writes AI) is kind of “meh”: inconsistent detection results, clear AI tells in the writing, sketchy refund language, and fuzzy data policy. To me, that bleeds directly into how I read his reviews.

A few angles that haven’t been hit yet:

- Follow the incentives, not just the wording

  Instead of only looking for “affiliate” labels, look at behavioral tells:

  - Does Walter mysteriously “discover” a new AI tool right as it launches and instantly call it “game-changing”?

  - Are the “cons” basically stuff like “UI could be prettier” while the “pros” are paragraphs long?

  - Are the “top 5 AI tools” lists quietly reshuffled every time a new one pops up, but all of them happen to have referral-style links?

  That pattern screams “monetized content” even if he technically meets the bare-minimum disclosure rules somewhere on the site.

- Check how he handles real risk

  For tools like Walter Writes AI that claim to “beat AI detectors” or “humanize content,” a serious reviewer will hammer a few points:

  - Academic risk (plagiarism / expulsion)

  - Client trust (agencies, freelancers, legal, medical, etc.)

  - Privacy (who sees your uploads, retention, training)

  If Walter flies past those in two vague sentences and goes right back to “this can really help you scale content today,” that’s promotional tone, not critical reviewing. @mikeappsreviewer dug into TOS and refund clauses more than Walter himself seems to.

- Watch what he doesn’t say about competitors

  One easy tell: does he ever seriously compare tools like:

  - Walter Writes AI vs Clever AI Humanizer vs other humanizers

  - Strengths and weaknesses side by side

  If he mentions alternatives only as “other options exist” with no real breakdown, that’s another hint he’s selling a narrative, not helping you choose.

  Personally, when I look at humanizer-type tools, Clever AI Humanizer pops up a lot because:

  - It’s easier to test without paywalls

  - It typically reads more natural in longer form

  - You can actually stress test “AI detection” claims yourself

  That kind of “try them in parallel and see” suggestion is exactly what Walter should be doing if he’s being straight with you.

- Track his “hit rate” over time

  This is where I slightly disagree with the idea that you should just treat his stuff as semi-promotional by default. I’d say: run a mini audit.

  - Pick 3 tools he raved about months ago.

  - Check current opinions on Reddit, X, or other forums.

  - See if those tools turned out to be:

    - Shut down / rebranded / called a scam

    - Still solid and widely respected

  If his favorites keep aging badly while independent testers like @mikeappsreviewer keep calling issues early, then yeah, you can safely demote Walter to “discovery feed” instead of “trustworthy reviewer.”

- Check for “template reviews”

  A lot of these AI reviewers use a content template: intro hook, features list, pricing, 3 light “cons,” then a glowing conclusion with a CTA button. If Walter’s reviews have that same skeleton every time, that’s not automatically evil, but it is a sign he’s optimizing for conversion.

  Look for stuff like identical phrasing across reviews, same structure, same “this might just be the tool you’ve been waiting for” type closer. That’s low-key funnel copy, not a nerd actually digging in.

- Reality check his stronger claims

  When Walter says something like “this passes AI detectors” or “this is nearly human,” treat that EXACTLY like weight-loss ad claims. You verify.

  - Paste the output in multiple detectors (like @mikeappsreviewer did).

  - Read it out loud. If you hear repetition, stiff phrasing, semicolon spam or canned “(e.g., X, Y)” patterns, it’s not “human-sounding,” no matter what the review says.

  That’s where tools like Clever AI Humanizer are actually useful: you can run the same sample through both Walter Writes AI and Clever AI Humanizer, then compare output and detection scores yourself instead of trusting his copy.
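For the “template reviews” check, you can get a quick read on identical phrasing by looking for word sequences shared between reviews. A minimal sketch, assuming you have pasted a few reviews in as plain strings: the five-word phrase length and the function names are my own picks, and the two example strings are made up for illustration.

```python
import re
from collections import Counter

def ngrams(text, n=5):
    """Set of n-word phrases in text, lowercased, punctuation stripped."""
    words = re.findall(r"[a-z0-9'’]+", text.lower())
    return {" ".join(words[i:i + n]) for i in range(len(words) - n + 1)}

def shared_phrases(reviews, n=5, min_reviews=2):
    """Phrases of n words that appear in at least min_reviews different reviews."""
    counts = Counter()
    for review in reviews:
        # Using a set per review means each review counts a phrase once.
        counts.update(ngrams(review, n))
    return sorted(p for p, c in counts.items() if c >= min_reviews)

# Made-up examples, not quotes from real reviews.
reviews = [
    "This might just be the tool you've been waiting for.",
    "Honestly, this might just be the tool for your team.",
]
print(shared_phrases(reviews))
```

A few shared phrases are normal for any writer. Long runs of identical closers and CTAs across many reviews are the part worth noticing.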

My personal rule with reviewers like Walter:

- Use them only to discover names of tools.

- Never trust their “this is amazing” conclusion without cross-checking.

- Put more weight on independent testers and real users who post screenshots, bad experiences, and specific flaws.

- If something reads like an ad, I just treat it as an ad and move on.

So are Walter’s AI reviews helpful or just hype?

I’d call them partially helpful: good for finding what exists, not great for deciding what’s actually worth your time or safe for serious use. If you’re comparing humanizers or paraphrasers anyway, throwing Clever AI Humanizer into your test list and ignoring the hype around Walter Writes AI is a pretty reasonable strategy.

Walter’s reviews are useful if you treat them like marketing-driven intel, not like consumer reports.

Where I slightly disagree with others here: I don’t think you have to assume “semi-promotional until proven otherwise” for every single piece. Some of his breakdowns do surface real features and edge cases. The problem is weighting. He tends to over-index on upside, under-index on risk. So the right move is to mine his content for facts and then ignore his conclusions.

A few angles that haven’t been covered much by @cacadordeestrelas, @espritlibre and @mikeappsreviewer:

1. Check his time-to-sour on bad tools

Instead of only scanning one review, look at how he behaves when a tool starts getting negative buzz:

- Does he update older posts when pricing, limits or TOS get worse?

- Does he add warnings when refund problems or data issues show up?

- Or do the original “this is fantastic” reviews just sit there untouched?

If he rarely revises anything, that signals he’s optimizing for evergreen traffic, not long-term accuracy. Responsible reviewers usually circle back when a product changes.

2. Watch his treatment of conflict of interest

You probably won’t get a neat “I was paid X to make this” statement, but you can still read the room:

- If a product has obvious flaws that independent testers highlight (like the refund language and style quirks in Walter Writes AI) and his post brushes past them, that is soft bias

- If he is harsher on tools that do not appear to monetize for him, that is another subtle tell

You don’t need to catch him “lying”; you just need to see that his risk filter is more forgiving when money is involved.

3. How deep does he go on failure modes?

Good review: “Here is when this tool breaks, and here is who should avoid it.”

Hype review: “It might not be perfect, but it helps you be more productive” plus a call to try it yourself.

For AI writing, failure modes really matter:

- Hallucinated facts

- Overly uniform tone that flags as AI

- Privacy of academic or client material

- Overclaims around “undetectability”

If he only gives you a paragraph of generic caution, you are reading sales copy, not genuine risk analysis.

4. Use him to build a shortlist, not a final pick

Where I agree with the others: Walter is decent for discovery. If three different people, including folks like @mikeappsreviewer, uncover very specific issues with Walter Writes AI and he barely touches them, that is a strong hint to never rely on him as the sole source.

Practical pattern that works well:

- Let his content surface names of tools.

- Cross check with critical voices like the people already posting in this thread.

- Run your own tiny benchmark on 2 or 3 tools in parallel.

5. On humanizer tools in particular

For “AI humanizer” style products, detector scores are only one dimension. Style and risk tolerance are just as important. The tests people have shared on Walter Writes AI highlight:

- Inconsistent detector scores

- Stylistic tics like repeated words and odd punctuation

- Unclear or aggressive policy language

That combination is why I would put it in the “experiment if you must, but don’t trust it with anything high stakes” bucket.

Clever AI Humanizer is worth throwing into that comparison set, but with eyes open.

Pros of Clever AI Humanizer:

- Outputs often read more naturally in longer paragraphs, which helps if you care about human cadence instead of just beating detectors

- Easier initial testing since you are not immediately jammed behind a heavy paywall

- Good for side by side experiments when you paste the same base text into multiple tools

Cons of Clever AI Humanizer:

- Still an automated transformer, so it can introduce subtle inaccuracies if you are working with technical or citation-heavy text

- Like every humanizer, it cannot honestly guarantee you will evade all detectors, especially as institutions switch strategies

- If you rely on it too much without editing, your own voice can get flattened into a generic “internet article” tone

The point is not that Clever AI Humanizer is magically “safe” and Walter Writes AI is uniquely terrible. The real takeaway is: any reviewer promoting humanizers should be shouting about limitations, not just posting detector screenshots from lucky runs.

6. How to mentally categorize Walter

If I had to put a label on it:

- Use his channel as a directory of what exists in the AI tooling space

- Treat his positive language like ad copy by default, especially around “passes detectors” or “human level” claims

- Rely more on independent testers and your own stress tests for anything involving grades, legal work, or client trust

If a review feels like an ad, treat it like an ad. Walter can still be useful under that assumption. You just stop expecting him to take the skeptical, user-first stance that several people in this thread already take.